In the realm of verification, coverage is essential in ensuring the closure on the functionality of complex systems. However, as systems become more intricate, achieving high test coverage can be a daunting challenge. There will be several manual iterations, reviews and oftentimes redoing some aspects of the bench to achieve higher coverage number. So I was asking a question to myself. Can we do coverage in an different way?

Not so long after I asked that question. I stumbled up on a class of algorithms called heuristic algorithms, meaning they may not give you an accurate answer but rather provide near optimal answer to an optimization question. One such algorithm I studied recently is known as genetic algorithm. Inspired by the principles of natural selection, this algorithm can optimize computational problems.

I wondered, can these algorithms be used for coverage problem in verification. In this article, I tried to explore on how these algorithms can be used for a general optimization problem with an example and then float an idea that the same can potentially be used for coverage improvement.

Setting up background

Natural selection is a mechanism that allows living organisms to adapt and change. It is a key mechanism in evolution. Species evolve to be better over multiple generations by means of strong inheritable traits and random mutations. Inspired from this theory of evolution in nature, a class of algorithms called evolutionary algorithms were created and are used to solve a computation optimization problems.

Genetic algorithm is one such algorithm to mimic the natural selection to come up with an optimized solution. Let us understand this algorithm with an example. The basic outline of the algorithm works like this.

-

- Start with initial population

- Define a criteria for fitness for each individual. This criteria is what we are trying to optimize.

- Evaluate the fitness for the entire population

- Select a subset of fittest individuals from the population called parents

- Perform crossover and mutation of parents to generate off-springs

- Off-springs becomes the new population

- Repeat this process for several iterations called generations

- Each generation will yield better fitness scores

Now that we know basic outline of the algorithm let us take a simple and hypothetical problem. Suppose we want to find a 32 bit number (say integer) that contains maximum number transitions in it. A transition is counted when two consecutive bits in the number are 10 or 01. If the number is 1011 then there are 2 transitions in the number.

Obviously the 32 bit number that we are looking for are : 10101010…. and 01010101…. we know these numbers have maximum number of transitions but we want the algorithm to find closest solution. We will develop a program based on genetic algorithm in system verilog to find out the numbers with high number of transitions.

The idea is start with a random set of numbers and let algorithm evolve those numbers to reach higher transition counts.

Planning the program

I want to divide the program into following routines.

-

- initializePopulation(): This function initializes the population and parents. The terms parents and population are nothing but different 32 bit number in the program.

- countTransitions(): A helper function that counts the number of bit transitions (0 to 1 or 1 to 0) in a given 32-bit integer.

- findFitness(): This function calculates the fitness of each offspring numbers based on the number of transitions in its value, using the countTransitions() function.

- findParents(): It sorts the offspring numbers in descending order of fitness and selects the top 5 to become the new parents for the next generation.

- nextGeneration(): This function creates a new generation of offspring by performing crossover and mutation operations on the current parents.

Implementing the algorithm

I have implemented a very basic version of the algorithm by swapping two random slices in two number to form new number called crossover and randomly toggling bits from 0 to 1 or 1 to 0 called mutation. Both these processes will allow us to generate more numbers (Offsprings) from selected numbers (parents). We repeat this process over multiple generations as shown in the code below.

Genetic Algorithm

class GeneticAlgo ;

int unsigned population[];

int unsigned parents[];

int unsigned numParents = 5;

int unsigned numOffSprings = 15;

int unsigned maxPopulationRange = 100000;

typedef struct {

int unsigned value;

int unsigned fitness;

} OffSpring;

OffSpring offSprings[];

function void initializePopulation();

population = new[numParents];

parents = new[numParents];

offSprings = new[numOffSprings];

foreach( population[i] ) begin

population[i] = $urandom_range(0, maxPopulationRange);

parents[i] = population[i];

end

$display("Initial population ready: %p", parents);

endfunction : initializePopulation

function int countTransitions( int unsigned offSpring );

int transitionCount = 0;

for( int i = 1; i < 32 ;i++ ) begin

if( offSpring[i] != offSpring[i-1]) begin

transitionCount++;

end

end

return transitionCount;

endfunction : countTransitions

function void findFitness();

foreach( offSprings[i] ) begin

offSprings[i].fitness = countTransitions( offSprings[i].value );

end

$display("=============");

$display("Generated Offsprings");

$display("=============");

foreach( offSprings[i])

$display("The number is %b (number of transitions: %d)", offSprings[i].value, offSprings[i].fitness);

$display("=============");

endfunction : findFitness

function void findParents();

offSprings.rsort() with ( item.fitness );

for( int i = 0 ; i < numParents ;i++) begin

parents[i] = offSprings[i].value;

end

$display("=============");

$display("Survived Parents for next generation");

$display("=============");

for( int i = 0 ; i < numParents ;i++)

$display("The number is %b (number of transitions: %d)", offSprings[i].value, offSprings[i].fitness);

$display("=============");

endfunction : findParents

function void nextGeneration();

int parent1, parent2, min, max, mutate;

int choice;

int unsigned temp, temp1, temp2;

int offSpringCount = 0;

repeat(3) begin

parent1 = $urandom_range(0,numParents-1);

do begin

parent2 = $urandom_range(0,numParents-1);

end while(parent2 == parent1 );

repeat(5) begin

min = $urandom_range(0,31);

max = $urandom_range(min,31);

temp = parents[parent1];

temp1 = parents[parent1];

temp2 = parents[parent2];

for( int j = min; j <= max ;j++) begin : crossover

temp1[j] = temp2[j];

temp2[j] = temp[j];

end

if( temp1 == temp2 ) begin : mutation

mutate = $urandom_range(0,31);

temp1[mutate] = !temp1[mutate];

end

choice = $urandom_range(0,1) ;

if( choice ) begin : choose_parent

offSprings[offSpringCount++].value = temp1;

end else begin

offSprings[offSpringCount++].value = temp2;

end

end

end

endfunction : nextGeneration

endclass : GeneticAlgo

module top;

GeneticAlgo geneticAlgo;

initial begin

int unsigned numGenerations = 50;

geneticAlgo = new();

geneticAlgo.initializePopulation();

repeat(numGenerations) begin

geneticAlgo.nextGeneration();

geneticAlgo.findFitness();

geneticAlgo.findParents();

end

end

initial begin

#400ns;

$finish();

end

endmodule : topResults after 50 generations

Generated Offsprings ============= The number is 01011010101100100101011010100101 (number of transitions: 25) The number is 01001010101100000101011010100101 (number of transitions: 23) The number is 01001010101100100101011010100101 (number of transitions: 25) The number is 01001010101100100101011010100101 (number of transitions: 25) The number is 01001010101100100101011010100101 (number of transitions: 25) The number is 01001010101100100101011010100101 (number of transitions: 25) The number is 01001010101100100101011010100101 (number of transitions: 25) The number is 00001010101100100101011010100101 (number of transitions: 23) The number is 01001010001100100101011010100101 (number of transitions: 23) The number is 01001010101100100101011010100101 (number of transitions: 25) The number is 01001010101100100101011010100101 (number of transitions: 25) The number is 01001010101100100101011010100101 (number of transitions: 25) The number is 01001010101100100101011010100101 (number of transitions: 25) The number is 01001011101100100101011010100101 (number of transitions: 23) The number is 01001010101100100101011010100101 (number of transitions: 25) ============= ============= Survived Parents for next generation ============= The number is 01001010101100100101011010100101 (number of transitions: 25) The number is 01001010101100100101011010100101 (number of transitions: 25) The number is 01001010101100100101011010100101 (number of transitions: 25) The number is 01001010101100100101011010100101 (number of transitions: 25) The number is 01001010101100100101011010100101 (number of transitions: 25) =============

Conclusion

I am very surprised to see the results. After 50 generations the solutions with more than 27 transitions started to emerge and are coming close to the actual solution which have 31 transitions.

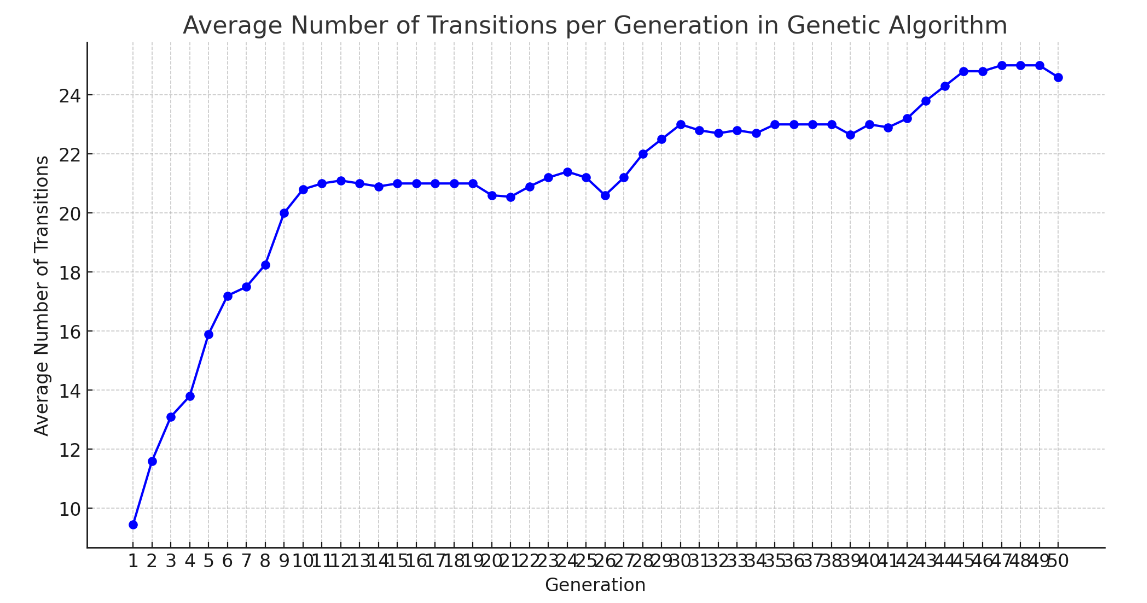

If the program is observed carefully, we never told the program on how to come up the with these numbers. We are simply mutating the numbers randomly and yet the the program evolved over multiple generations by selecting the best numbers and purging weak numbers for next generation. I plotted the average transitions of all off-springs in each generation.

I was very happy and exited to see the plot. Now coming back to the question that I asked at the beginning of the article. Can we do coverage in an different way?. Maybe.

Imagine a test bench when run will start with initial stimulus/configuration and gather coverage (which is the fitness criteria in this case), Iterate multiple generations to streamline its stimulus/configuration by randomly mutating/crossing and come up with maximum amount of coverage. We can come back to it after couple of days to witness high numbers of coverage without even lifting a finger. Wouldn’t that be wonderful.

Am I being too greedy?

Hello! Someone in my Myspace group shared this site with us so I came to take a look. I’m definitely loving the information. I’m book-marking and will be tweeting this to my followers! Superb blog and amazing design.