Introduction: The UVM Test End Problem

If you are new to UVM objections, it is very easy to file them away as one more pair of APIs to memorize:

    phase.raise_objection(this);
    ...
    phase.drop_objection(this);

That is technically correct, but it misses the real reason objections exist.

In a UVM testbench, the difficult part is usually not starting activity. Starting activity is easy. A test can launch sequences, drivers can consume items, monitors can sample interfaces, scoreboards can compare expected and actual behavior, and coverage can tick along in the background. The real problem is deciding when all of that work is actually finished. In practice, those things do not stop at the same instant. Stimulus may finish first, while responses are still coming back from the DUT and the scoreboard is still draining its final comparisons.

That is the problem objections solve. They are UVM’s runtime coordination mechanism for phase lifetime, especially for run_phase.

Without that coordination, the test can end at exactly the wrong moment. I have seen this many times in real environments: the last sequence item is launched, the test thread returns, and simulation ends while the DUT is still producing a valid response a few cycles later. Sometimes the monitor has already observed it but the scoreboard has not processed it yet. Sometimes the DUT tail latency only shows up in certain traffic patterns, so the issue appears intermittent and wastes a lot of debug time.

So the right mental model is simple. An objection is not “just something you call in run_phase.” It is a shared way for the testbench to say, “do not let this phase end yet, there is still meaningful verification work in flight.”

In this article, I will walk through how objections work, why they are mostly associated with task phases, where engineers usually get into trouble, and how I prefer to use them in environments that need to be debugged and maintained by more than one person. We will also talk about drain time, scoreboard-aware completion, and the practical debug switches that help when a phase hangs or ends too early.

What Are UVM Objections?

At the conceptual level, a UVM objection is just a hold request on a phase. When some part of the testbench raises an objection, it is telling UVM to keep the current phase alive. When it drops that objection, it is saying its part of the phase work is complete.

You can think of the two calls this way:

- raise_objection() means “keep the phase running”

- drop_objection() means “my contribution to keeping it alive is done”

Under the hood, each phase maintains objection state. Every raise increments the count. Every drop decrements it. A phase only becomes eligible to complete when that objection count reaches zero and any configured end-of-phase behavior has also been honored.

That is why balance matters so much. If something raises and never drops, the phase can sit there forever until a timeout kills the run. If something drops too early, the phase can complete while useful work is still happening in parallel.

You can technically involve many UVM objects in objection handling, but in practice that freedom is where people often make life harder for themselves. The test can do it. A root sequence can do it. Sometimes a scoreboard or some completion manager does it. All of those are legal. Not all of them are equally maintainable.

My general advice is to keep objection ownership high in the hierarchy unless you have a very good reason to distribute it. The more places that can independently keep the phase alive, the harder it becomes to answer a simple debug question: who is still holding the simulation open?

Why Objections Are Needed in UVM Testbenches

The most common use case is protecting run_phase from ending too early.

That matters because run_phase is where actual timed behavior happens. Unlike build_phase or connect_phase, it is full of waits, clock edges, protocol handshakes, and concurrent threads. Your sequence may finish generating transactions before the driver has finished sending them. The driver may finish before the DUT has responded. The DUT may respond before the monitor and scoreboard have fully processed the last transfer. None of that is unusual. In fact, that is normal in any realistic environment.

Objections give all of that concurrent activity a common phase-level stop condition. They do not tell you whether verification is complete, but they do provide the mechanism to prevent UVM from ending the phase while your completion logic is still waiting.

They also support reuse. A top-level test can own the objection policy. A reusable root sequence can optionally own its own lifetime. A scoreboard can participate if the environment is intentionally structured that way. That flexibility is useful, but it comes with a tradeoff: flexibility without discipline turns into hidden ownership, and hidden ownership is one of the fastest ways to make end-of-test debug painful.
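For the case where a scoreboard does take part, one controlled way to do it is the `phase_ready_to_end()` hook that every `uvm_component` inherits. The sketch below is only an illustration under assumptions: the class name `tail_aware_scoreboard` and the `outstanding` counter are hypothetical, and the bookkeeping that updates the counter is left out.

```systemverilog
import uvm_pkg::*;
`include "uvm_macros.svh"

// Hypothetical scoreboard fragment; only the end-of-phase hook is shown.
class tail_aware_scoreboard extends uvm_scoreboard;
  `uvm_component_utils(tail_aware_scoreboard)

  // Assumed bookkeeping: incremented per expected item, decremented per check.
  int unsigned outstanding;

  function new(string name, uvm_component parent);
    super.new(name, parent);
  endfunction

  // UVM calls this hook once all objections to the phase have been dropped.
  function void phase_ready_to_end(uvm_phase phase);
    if (phase.get_name() != "run" || outstanding == 0)
      return;

    // Re-raise so the phase stays alive until the tail has been checked.
    phase.raise_objection(this, "Scoreboard still has outstanding items");
    fork
      begin
        wait (outstanding == 0);
        phase.drop_objection(this, "Scoreboard drained");
      end
    join_none
  endfunction
endclass
```

The scoreboard only speaks up when the phase is about to end with work still pending, so the test remains the primary owner and the scoreboard acts as a safety net.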

How the UVM Objection Mechanism Works

When you call raise_objection(), you increment the objection count for the current phase. In most code bases, you will see something like this:

    phase.raise_objection(this, "Starting stimulus");

The first argument identifies the source object. The optional string is not decoration. Use it. When you are tracing objection behavior in a large regression, that message often tells you immediately whether the raise is expected or whether some component is holding the phase longer than intended.

Dropping the objection is the inverse operation:

    phase.drop_objection(this, "Stimulus completed");

At that point, that particular source is no longer asking UVM to keep the phase alive.

Once the total objection count reaches zero, the phase becomes ready to end. That does not always mean it ends in the same delta cycle because drain time and some phase machinery may still apply, but conceptually that is the trigger. If nobody is objecting anymore, the phase is allowed to move on.

There is hierarchical handling involved inside UVM, but most engineers do not need to know every implementation detail to use objections correctly. The practical rule is enough: objections contribute to a shared phase-level decision, not just a local component decision.
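The counting behavior can be observed directly. The following is a minimal sketch, assuming UVM 1.2 or later for `phase.get_objection()` (UVM 1.1 code bases reach the same object through `phase.phase_done`); the test name `count_demo_test` and the 100 ns placeholder delay are made up for illustration.

```systemverilog
import uvm_pkg::*;
`include "uvm_macros.svh"

class count_demo_test extends uvm_test;
  `uvm_component_utils(count_demo_test)

  function new(string name = "count_demo_test", uvm_component parent = null);
    super.new(name, parent);
  endfunction

  task run_phase(uvm_phase phase);
    // UVM 1.2 or later; UVM 1.1 code bases use phase.phase_done instead.
    uvm_objection objection = phase.get_objection();

    phase.raise_objection(this, "First raise");
    phase.raise_objection(this, "Second raise");

    // Report how many objections this test holds and the overall total.
    `uvm_info("OBJ", $sformatf("held by this test: %0d, total: %0d",
              objection.get_objection_count(this),
              objection.get_objection_total()), UVM_LOW)

    #100ns; // placeholder for real work

    // Each raise needs a matching drop before the phase can end.
    phase.drop_objection(this, "First drop");
    phase.drop_objection(this, "Second drop");
  endtask
endclass
```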

Objections in the UVM Phasing Model

When people say “UVM objections,” they almost always mean objections used in run_phase or another task phase.

That distinction matters. Function phases such as build_phase, connect_phase, and end_of_elaboration_phase do not advance simulation time the way task phases do. They are not where end-of-test control usually lives. By contrast, task phases can wait on clocks, fork threads, and interact with the DUT over time. That is where objection control becomes relevant.

This is also where drain time enters the picture. UVM allows a phase to delay completion briefly after the objection count reaches zero. The classic example looks like this:

    phase.phase_done.set_drain_time(this, 50ns);

Drain time can be useful when there is a known, bounded tail after stimulus completes. For example, maybe the final monitored packet always appears within a fixed number of cycles after the last item is driven. In that case, adding a small cushion may be reasonable.

But this is one of those places where experience matters. Drain time is often misused as a substitute for proper completion logic. If your scoreboard must confirm that all expected transactions were checked, then a guessed delay is not a real end condition. It is just a hopeful pause. Hope is not a verification strategy. Use drain time as a small cushion when the tail is genuinely bounded and understood. Do not use it to hide the fact that the environment does not really know when it is done.
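Direct access to `phase_done` is the UVM 1.1 idiom. The sketch below shows the same bounded cushion set through the `get_objection()` accessor, assuming UVM 1.2 or later. The test name, the 200 ns stimulus placeholder, and the 50 ns bound are illustrative assumptions, not values taken from a real environment.

```systemverilog
import uvm_pkg::*;
`include "uvm_macros.svh"

class drain_time_test extends uvm_test;
  `uvm_component_utils(drain_time_test)

  function new(string name = "drain_time_test", uvm_component parent = null);
    super.new(name, parent);
  endfunction

  task run_phase(uvm_phase phase);
    // Accessor available in UVM 1.2 or later.
    uvm_objection objection = phase.get_objection();

    // Assumed bound: the DUT tail never exceeds 50 ns after the last item.
    objection.set_drain_time(this, 50ns);

    phase.raise_objection(this, "Start test");
    #200ns; // placeholder for stimulus
    phase.drop_objection(this, "Stimulus complete");
    // The drop only takes effect after the drain time has elapsed,
    // provided nothing raises a new objection in the meantime.
  endtask
endclass
```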

Basic Usage Pattern

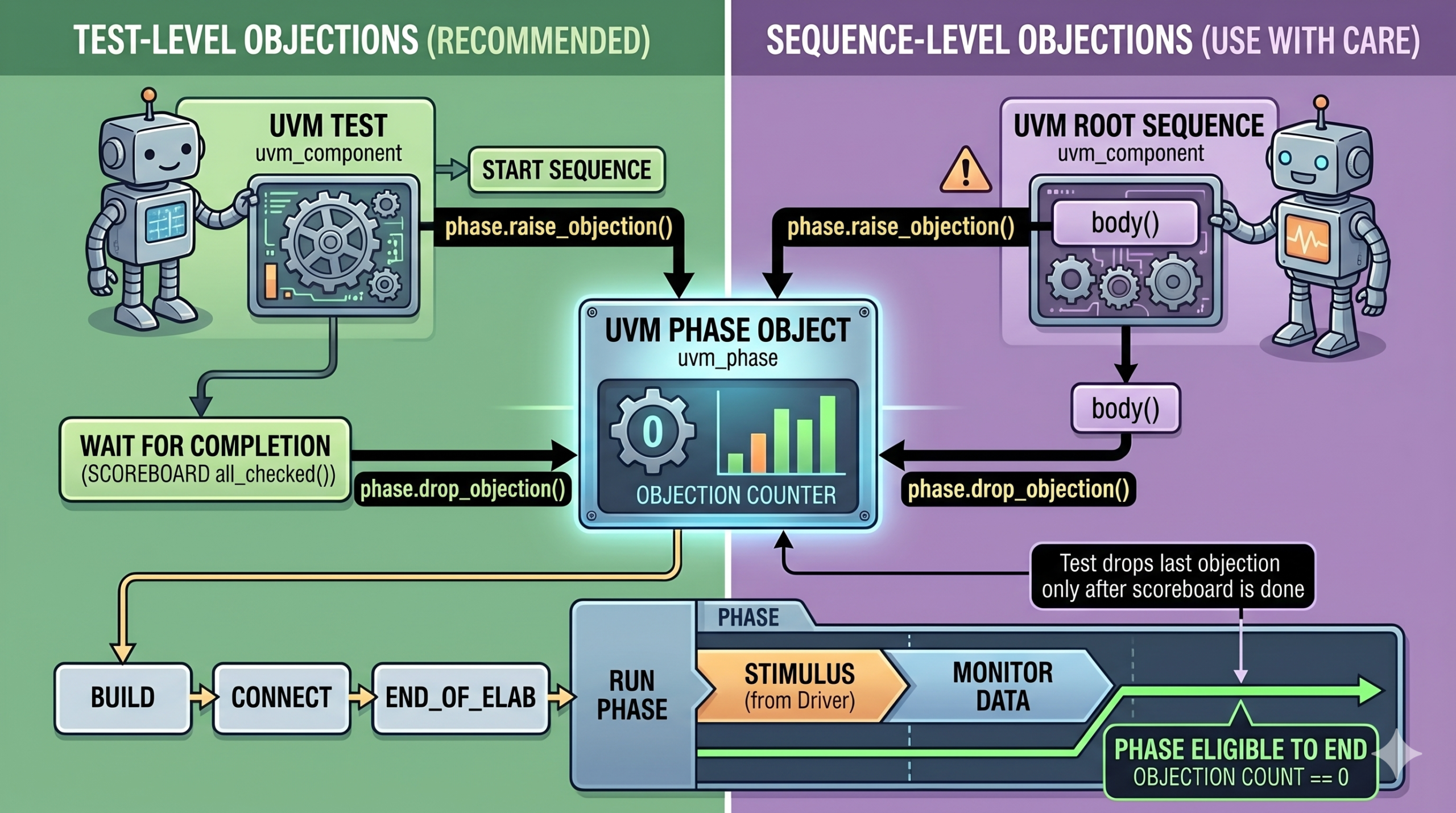

The simplest objection pattern is still the best one in many environments: let the test own the objection lifecycle.

The test raises an objection before meaningful activity starts, launches the scenario, waits for explicit completion criteria, and only then drops the objection. That keeps end-of-test ownership in one obvious place.

A sequence can also manage its own objections, usually in pre_body() and post_body(). That can work well for a genuine top-level sequence that owns the scenario. I have used that style before, but I only like it when the team is disciplined about how root sequences are started. The gotcha is starting_phase. UVM populates it automatically only when the sequence is launched as the default sequence of a phase. If the sequence is started by calling start() directly and nobody assigns the phase first, starting_phase is null, and your carefully written objection code quietly does nothing. That is the sort of thing that confuses junior engineers because the code looks correct and still fails to protect the phase.

So if you are choosing a default style, my recommendation is straightforward: start with test-level objections. Move objection ownership into a sequence only when that choice is intentional and understood by the whole environment.

Code Walkthrough: Minimal UVM Objection Example

Here is the basic pattern I recommend most often. The test owns the objection and ties the drop to a real completion condition.

    class my_test extends uvm_test;
      `uvm_component_utils(my_test)

      my_env env;

      function new(string name = "my_test", uvm_component parent = null);
        super.new(name, parent);
      endfunction

      function void build_phase(uvm_phase phase);
        super.build_phase(phase);
        env = my_env::type_id::create("env", this);
      endfunction

      task run_phase(uvm_phase phase);
        my_seq seq;

        // Hold the phase open before any stimulus starts.
        phase.raise_objection(this, "Starting stimulus");

        seq = my_seq::type_id::create("seq");
        seq.start(env.agent.sequencer);

        // Poll rather than wait() on a function call: a wait on a bare
        // function call is not reliably re-evaluated. The 10ns interval
        // is an arbitrary choice for illustration.
        while (!env.scoreboard.all_checked()) #10ns;

        phase.drop_objection(this, "Stimulus and checking completed");
      endtask
    endclass

What I like about this pattern is not just that it works. It makes the end-of-test policy visible. The objection is not dropped because the sequence returned. It is dropped because the environment has reached an explicit completion condition, all_checked(). That is a much healthier contract.

Yes, the test now needs visibility into scoreboard completion. In my view, that is usually a good tradeoff. If the scoreboard defines the end-of-test truth, the test should be able to ask for that truth directly.
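To make that contract concrete, here is one possible shape for the scoreboard side. This is a sketch under assumptions, not the scoreboard from a real environment: the class layout, the `data` field on `my_item`, the in-order matching, and the analysis export names are all hypothetical. Only `all_checked()`, `expected_count`, and `actual_count` echo names used in this article.

```systemverilog
import uvm_pkg::*;
`include "uvm_macros.svh"

// Two analysis imps on one component need distinct suffixes.
`uvm_analysis_imp_decl(_expected)
`uvm_analysis_imp_decl(_actual)

// Minimal stand-in item; the data field is an assumption.
class my_item extends uvm_sequence_item;
  rand bit [7:0] data;
  `uvm_object_utils(my_item)
  function new(string name = "my_item");
    super.new(name);
  endfunction
endclass

class my_scoreboard extends uvm_scoreboard;
  `uvm_component_utils(my_scoreboard)

  uvm_analysis_imp_expected #(my_item, my_scoreboard) expected_export;
  uvm_analysis_imp_actual   #(my_item, my_scoreboard) actual_export;

  int unsigned expected_count;
  int unsigned actual_count;
  protected my_item pending[$]; // expected items not yet matched

  function new(string name, uvm_component parent);
    super.new(name, parent);
    expected_export = new("expected_export", this);
    actual_export   = new("actual_export", this);
  endfunction

  // Called for every predicted transaction.
  function void write_expected(my_item t);
    expected_count++;
    pending.push_back(t);
  endfunction

  // Called for every observed transaction; compares in arrival order.
  function void write_actual(my_item t);
    my_item want;
    actual_count++;
    if (pending.size() == 0) begin
      `uvm_error("SB", "Actual item with no expected item pending")
      return;
    end
    want = pending.pop_front();
    if (want.data != t.data)
      `uvm_error("SB", $sformatf("Mismatch: expected %0h, got %0h", want.data, t.data))
  endfunction

  // True once at least one item was expected and none remain unmatched.
  function bit all_checked();
    return (expected_count > 0) && (pending.size() == 0);
  endfunction
endclass
```

Requiring `expected_count > 0` is a deliberate choice in this sketch: it keeps an idle scoreboard from reporting "done" before any traffic has been predicted.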

Example sequence controlling its own objection

    class my_seq extends uvm_sequence #(my_item);
      `uvm_object_utils(my_seq)

      function new(string name = "my_seq");
        super.new(name);
      endfunction

      // starting_phase is the UVM 1.1 field; UVM 1.2 deprecates it in
      // favor of get_starting_phase() and set_starting_phase().
      task pre_body();
        if (starting_phase != null)
          starting_phase.raise_objection(this, "Sequence starting");
      endtask

      task body();
        my_item req;

        repeat (10) begin
          req = my_item::type_id::create("req");
          start_item(req);
          // Check the result explicitly: a randomize() call wrapped in
          // assert() is skipped when assertions are disabled.
          if (!req.randomize())
            `uvm_error("RANDFAIL", "Failed to randomize req")
          finish_item(req);
        end
      endtask

      task post_body();
        if (starting_phase != null)
          starting_phase.drop_objection(this, "Sequence completed");
      endtask
    endclass

This style makes the sequence more self-contained, and there are environments where that is exactly what you want. But the caveat around starting_phase is not minor. It is the main thing that trips people up. If the sequence is started explicitly and no phase context is attached, starting_phase is null, and the objection path is effectively disabled.

That is why I do not like casual sequence-level objections in nested or reusable traffic sequences. If the sequence is truly a root scenario object, fine. If it is just one piece of larger stimulus, I would rather keep the objection policy above it.
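When a root sequence is started by hand, the phase has to be attached before start() is called. A minimal sketch, assuming the `my_seq` and `my_env` classes from the examples above and a hypothetical test name `phase_aware_test`:

```systemverilog
import uvm_pkg::*;
`include "uvm_macros.svh"

// Assumes my_seq, my_env and env.agent.sequencer from the examples above.
class phase_aware_test extends uvm_test;
  `uvm_component_utils(phase_aware_test)

  my_env env;

  function new(string name = "phase_aware_test", uvm_component parent = null);
    super.new(name, parent);
  endfunction

  function void build_phase(uvm_phase phase);
    super.build_phase(phase);
    env = my_env::type_id::create("env", this);
  endfunction

  task run_phase(uvm_phase phase);
    my_seq seq = my_seq::type_id::create("seq");

    // Attach the phase so pre_body() and post_body() see a non-null handle.
    // UVM 1.1 style; with UVM 1.2 or later use seq.set_starting_phase(phase).
    seq.starting_phase = phase;

    seq.start(env.agent.sequencer);
  endtask
endclass
```

Without the assignment, the null checks in pre_body() and post_body() skip both objection calls and the sequence runs unprotected.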

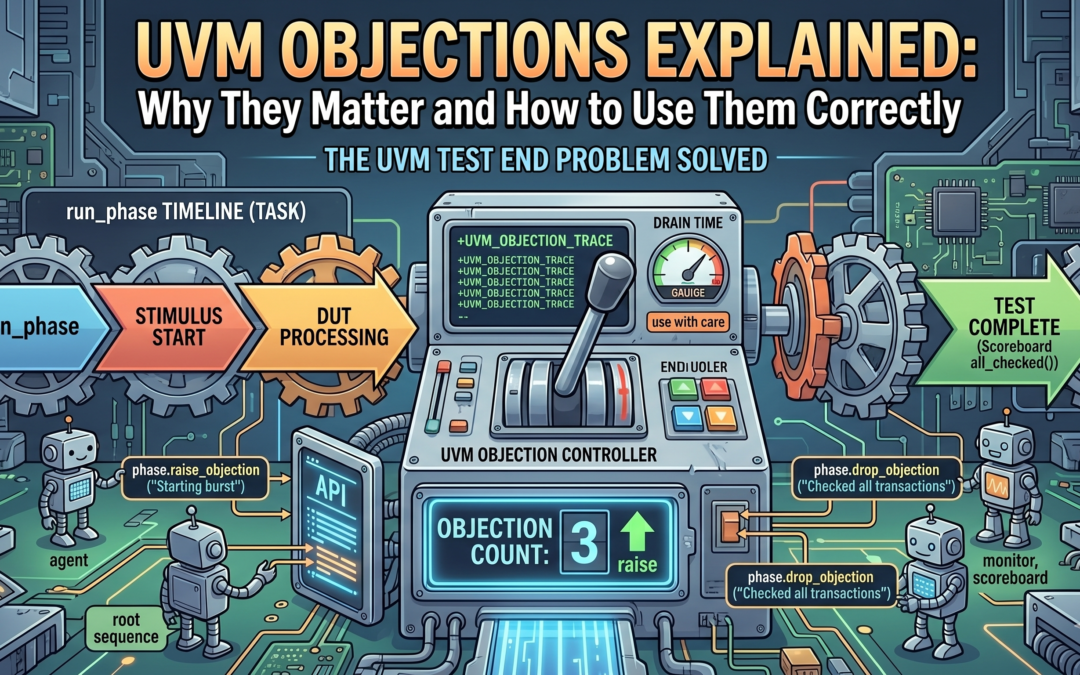

Simulation timeline: when the test starts, waits, and ends

In both patterns, the timing story is the same. Raise before meaningful work begins. Keep the phase alive while stimulus, DUT processing, monitoring, and checking are happening. Drop only when the owner knows the work is actually complete.

That is really all objections are doing. They are not magical. They are just phase lifetime control.

Objections and End-of-Test Control

This is where many engineers confuse “phase control” with “verification completion.” They are related, but they are not the same thing.

An objection does not know whether your scoreboard is done. It does not know whether all expected responses returned. It does not know whether a packet is still stuck in a DUT pipeline somewhere. It only knows whether some object is still asking the phase to stay alive.

So the right pattern is almost always an objection plus an explicit completion condition.

Here is a more realistic end-of-test example:

    task run_phase(uvm_phase phase);
      my_seq seq;

      phase.raise_objection(this, "Starting end-to-end scenario");

      seq = my_seq::type_id::create("seq");
      seq.start(env.agent.sequencer);

      // Every expected transaction has a matching actual one.
      wait (env.scoreboard.expected_count == env.scoreboard.actual_count);

      // Poll the function result; wait() on a bare function call is not
      // reliably re-evaluated. The 10ns interval is illustrative.
      while (!env.scoreboard.no_outstanding_transactions()) #10ns;

      phase.drop_objection(this, "All expected transactions checked");
    endtask

This is the kind of code that saves you from tail bugs. A sequence returning is not a reliable “done” condition in many environments. The DUT may still be draining. The monitor may still be assembling the last packet. The scoreboard may still be waiting for the final expected-versus-actual match. If you drop the objection just because stimulus generation ended, you are often ending the test at exactly the point where the last useful checks are still in flight.

That is also why I am cautious with drain time. Here is the classic pattern:

    task run_phase(uvm_phase phase);
      phase.raise_objection(this, "Start test");

      run_stimulus();

      phase.phase_done.set_drain_time(this, 50ns);
      phase.drop_objection(this, "Stimulus complete");
    endtask

This is acceptable when the remaining tail is small, deterministic, and understood. It is not acceptable as a replacement for scoreboard completion or response accounting. If your environment only works because 50 ns happened to be enough this week, then you do not really have end-of-test control. You have a timing guess.
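An explicit completion wait has a failure mode of its own: if a response never arrives, the objection is never dropped and the phase hangs. One way to bound the wait is sketched here. The class name, the local stand-in counters, and the 10 us budget are assumptions for illustration; a real test would watch the scoreboard's counters instead.

```systemverilog
import uvm_pkg::*;
`include "uvm_macros.svh"

class bounded_wait_test extends uvm_test;
  `uvm_component_utils(bounded_wait_test)

  // Stand-ins for the scoreboard counters used earlier in this article.
  int unsigned expected_count;
  int unsigned actual_count;

  // Assumed budget for the tail; tune it to the design.
  time completion_timeout = 10us;

  function new(string name = "bounded_wait_test", uvm_component parent = null);
    super.new(name, parent);
  endfunction

  task run_phase(uvm_phase phase);
    phase.raise_objection(this, "Starting scenario");

    // ... start stimulus here ...

    // The outer fork isolates "disable fork" so it only kills the two
    // branches spawned below.
    fork
      begin
        fork
          wait (expected_count == actual_count);
          begin
            #(completion_timeout);
            `uvm_error("EOT", "Timed out waiting for scoreboard completion")
          end
        join_any
        disable fork;
      end
    join

    phase.drop_objection(this, "Completion reached or timed out");
  endtask
endclass
```

The timeout branch reports an error rather than silently dropping the objection, so a missing response fails the test instead of hiding behind a clean exit.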

Best Practices for UVM Objections

I prefer a small set of rules here because teams remember simple rules better than long methodology documents.

- Keep objection ownership obvious.

- Tie objection drops to real completion criteria, not just “stimulus launched” or “sequence returned.”

- Use descriptive messages in raise and drop calls.

- Use drain time as a cushion, not as your primary completion mechanism.

If I expand just one of those, it is the first one. Clear ownership matters more than cleverness. In most environments, the cleanest answer is that the test owns objections. Sometimes a root sequence owns them. Rarely, a scoreboard participates in a controlled way. Whatever you choose, make it consistent enough that anyone on the team can answer: who decides when this test is done?

Also, use the message argument. I know it is tempting to skip it, but when you are reading objection traces at 2 AM during regression triage, “Starting burst traffic” and “Scoreboard confirmed all bursts checked” are a lot more helpful than silent count changes.
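Messages help most when you can also ask the objection object who is still holding on. The sketch below forks a small watchdog that prints the outstanding objectors after a time limit. It assumes UVM 1.2 or later for `phase.get_objection()`; the helper name `report_objectors`, the test name, and the 1 ms and 500 ns values are hypothetical.

```systemverilog
import uvm_pkg::*;
`include "uvm_macros.svh"

class traced_test extends uvm_test;
  `uvm_component_utils(traced_test)

  function new(string name = "traced_test", uvm_component parent = null);
    super.new(name, parent);
  endfunction

  // Hypothetical helper: after `limit` of simulated time, list whoever
  // still objects to the phase.
  task report_objectors(uvm_phase phase, time limit);
    uvm_objection objection = phase.get_objection(); // UVM 1.2 or later
    #(limit);
    if (objection.get_objection_total() > 0)
      objection.display_objections();
  endtask

  task run_phase(uvm_phase phase);
    // Runs in the background; it is killed when run_phase ends.
    fork
      report_objectors(phase, 1ms);
    join_none

    phase.raise_objection(this, "Starting burst traffic");
    #500ns; // placeholder for stimulus and checking
    phase.drop_objection(this, "Scoreboard confirmed all bursts checked");
  endtask
endclass
```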

Conclusion

UVM objections make a lot more sense once you stop thinking of them as an API pair and start thinking of them as phase lifetime control.

They exist because UVM testbenches are concurrent. Stimulus, DUT activity, monitoring, and checking do not finish at the same instant, and the framework needs a clean rule for when a task phase is allowed to end. Objections provide that rule. They are the testbench’s shared “keep running” vote.

The practical rule is simple: raise before meaningful work starts, and drop only after real completion criteria are satisfied. Not after the sequence happens to return. Not after a guessed delay. After the environment can honestly say the work is done.

Use objections to control phase lifetime. Do not expect them to replace scoreboard logic, response tracking, or explicit synchronization. Prefer test-level ownership unless there is a clear reason to do otherwise. Use drain time carefully. And when something behaves strangely, turn on +UVM_OBJECTION_TRACE early instead of guessing.

If you want one useful review item after reading this, inspect your current tests and ask three questions. Where are objections raised? Where are they dropped? And are those drop points tied to actual completion, or just to the end of stimulus generation? That small audit catches a surprising number of premature-end bugs and hidden hangs.
